An IBM Q Network collaborator comes to visit

The global quantum community is a growing scientific space, and increasingly a vibrant business community. Q-CTRL is happy to be one of the first quantum start-ups, and as our technological capacity develops, so too does the broader community.

A central part of our success is being tied into this global network. So we are always happy when friends drop by from distant and exotic lands, like Canada.

Randomized benchmarking of quantum systems

Fresh on the heels of teaming up with IBM, Q-CTRL this week had a visit from Professor Joseph Emerson, the founder of Canadian company Quantum Benchmark, another of the start-ups that have joined Big Blue's Q Network.

As well as running a quantum start-up, Joseph Emerson is an associate professor at the Institute for Quantum Computing at the University of Waterloo, Canada.

Quantum Benchmark is developing software that helps teams conduct performance validation of quantum hardware. That process relies on something called randomized benchmarking of quantum systems, through which a hardware operator can gain insights into the errors occurring on their physical devices.

What Q-CTRL offers is functionality that helps stabilise quantum hardware itself, dramatically driving down error rates. To demonstrate that our quantum firmware works, we also use randomized benchmarking, as part of our toolkit for understanding the relationship between noise and the logical errors it can produce.

So when our Senior Quantum Control Engineer Harrison Ball sat down with Associate Professor Emerson, they had a lot to talk about.

Good to have Joe Emerson from @Q_Benchmark at @Sydney_Science @ARC_EQUS talking #randomizedbenchmarking for #quantuncomputing pic.twitter.com/k3hTSgGZuL

— Michael J. Biercuk (@MJBiercuk) April 12, 2018

Dr Ball, who has a PhD in quantum physics from the University of Sydney, said: "The problem of dealing with noise and the resulting errors in implementing quantum operations is shared across all physical systems.

"In particular, there is a growing understanding that the nature of noise imprints itself characteristically on the resulting errors and this dependency must be appropriately incorporated into the benchmarking process."

Quantum systems are highly susceptible to electromagnetic interference, or noise, and this manifests as errors in those systems: quantum logic gates don't perform as we want them to. In general we want to minimize the errors in those systems so that we can do something useful with them.

So quantum theorists turn to the mathematical framework of "Quantum Error Correction." If we can achieve certain performance levels it's possible for Quantum Error Correction to take over, allowing us to build arbitrarily large quantum computers. In principle.

Those performance levels can be quite challenging to reach, and achieving error rates below the so-called "fault-tolerance threshold" has been a main driver for research in the community for years. Randomized benchmarking provides one technique for checking whether error rates meet those requirements.
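For readers who like to see the arithmetic, here is a minimal sketch of how an error rate can be read off a randomized benchmarking experiment. It assumes the textbook single-qubit decay model, in which the average survival probability after m random gates follows A·p^m + B, and it uses made-up, noise-free data. The function names, the value of `true_p` and the threshold figure are placeholders chosen for illustration; none of them come from Q-CTRL or Quantum Benchmark software.

```python
import numpy as np

def rb_survival(m, p, A=0.5, B=0.5):
    """Textbook decay model: mean survival probability after m random gates."""
    return A * p**m + B

def fit_error_per_gate(lengths, survival, B=0.5, d=2):
    """Estimate the decay constant p with a log-linear fit.

    Assumes the offset B is known (0.5 for an ideal single qubit),
    then converts p to an average error per gate, r = (1 - p)(d - 1)/d.
    """
    y = np.log(np.asarray(survival, dtype=float) - B)
    slope, _ = np.polyfit(np.asarray(lengths, dtype=float), y, 1)
    p = np.exp(slope)
    return (1.0 - p) * (d - 1) / d

# Synthetic, noise-free data for illustration only.
lengths = np.array([1, 5, 10, 25, 50, 100])
true_p = 0.998
data = rb_survival(lengths, true_p)

r = fit_error_per_gate(lengths, data)

# Placeholder threshold: real values depend on the error-correcting code.
ILLUSTRATIVE_THRESHOLD = 1e-2
below_threshold = r < ILLUSTRATIVE_THRESHOLD
print(f"estimated error per gate: {r:.2e}, below threshold: {below_threshold}")
```

In a real experiment the survival probabilities come from averaging over many random gate sequences, and the fit also has to estimate A and B, which absorb state-preparation and measurement errors.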

"Randomized benchmarking is a tool developed to evaluate how well your system implements what it's meant to," Dr Ball said. "Historically, this framework has been deployed using simplified assumptions about the type of underlying noise.

"However, these assumptions are not always valid in real systems, which face a variety of different noise processes. Our studies have led to a more general understanding of the mapping between particular noise types and characteristic errors."

So, noise produces errors ... but not all noise is the same.

In 2015 Dr Ball wrote a paper showing that different kinds of noise produce different signatures in randomized benchmarking. That paper was published in Physical Review A, and led to experimental validation of his theoretical insights, published in npj Quantum Information.

Looking at randomized benchmarking in a new way allows you to map noise processes responsible for the errors to the correlation characteristics of the errors themselves. This can provide a powerful tool to manage quantum hardware.

"Harrison's work was the inspiration for our work on error virtualization in quantum systems using quantum control," said Q-CTRL founder Professor Michael J. Biercuk. But that's a story for another day.

As Prof. Emerson pointed out during his visit, the fault-tolerance error threshold is only part of the story. We also need to worry about the statistical characteristics of the errors; i.e. whether they are totally random or share common attributes in time and across many qubits.

And so performance evaluation tools also face the subtle challenge of not just measuring how likely errors might be, but also what their so-called correlation properties are.

Standard quantum error correction assumes that all errors are due to Markovian noise. This just means the noise has no memory: it is totally random from one moment to the next, like static on the radio. However, not all noise behaves this way.
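A toy calculation shows why the distinction matters. The sketch below is not randomized benchmarking and is not the model from Dr Ball's paper. It simply applies the same gate many times to one qubit, gives each gate a small random rotation error, and compares two assumed cases: errors drawn fresh for every gate (memoryless), and a single error that repeats on every gate (a slow drift). The function name and all parameter values are hypothetical.

```python
import numpy as np

def mean_infidelity(num_gates, sigma, correlated, trials=20000, seed=0):
    """Average infidelity after num_gates gates with small rotation errors.

    Toy model: every error is a rotation about the same axis, so the
    error angles simply add up. The infidelity for a total stray angle
    theta is sin^2(theta / 2).
    """
    rng = np.random.default_rng(seed)
    if correlated:
        # One random offset per trial, repeated on every gate (slow drift).
        offsets = rng.normal(0.0, sigma, size=(trials, 1))
        angles = np.repeat(offsets, num_gates, axis=1)
    else:
        # A fresh, independent error on every gate (memoryless noise).
        angles = rng.normal(0.0, sigma, size=(trials, num_gates))
    total = angles.sum(axis=1)
    return float(np.mean(np.sin(total / 2.0) ** 2))

markovian = mean_infidelity(num_gates=100, sigma=0.01, correlated=False)
drifting = mean_infidelity(num_gates=100, sigma=0.01, correlated=True)
print(f"memoryless noise: {markovian:.4f}")
print(f"correlated noise: {drifting:.4f}")
```

With the same error strength per gate, the memoryless errors partly cancel while the repeated error keeps pushing in one direction, so the correlated case ends up far worse in this toy setting. Randomized benchmarking scrambles the gates between errors, which changes how the two cases show up in the data.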

Dr Ball said these differences affect the type of errors you get, and in turn what signatures appear in randomized benchmarking. Such subtleties formed a big chunk of the discussion with Professor Emerson at Q-CTRL this week.

"There is a lot of overlap and a big part of our conversation was trying to understand how different communities describe the noise they experience in quantum systems and whether the results they get map onto our model, and vice versa.

"We're all motivated to unify our understanding of these pathologies in performance validation protocols like randomized benchmarking. Moreover, understanding how to deploy this knowledge in building real quantum technologies is providing exciting new opportunities.

"Approaching these problems through the framework of a quantum start-up like Q-CTRL gives these conversations a sharper focus," Dr Ball said.

These signatures of noise correlations in error characterization routines like randomized benchmarking were first experimentally tested using trapped-ion qubits at the University of Sydney's quantum control laboratory, run by Professor Biercuk.

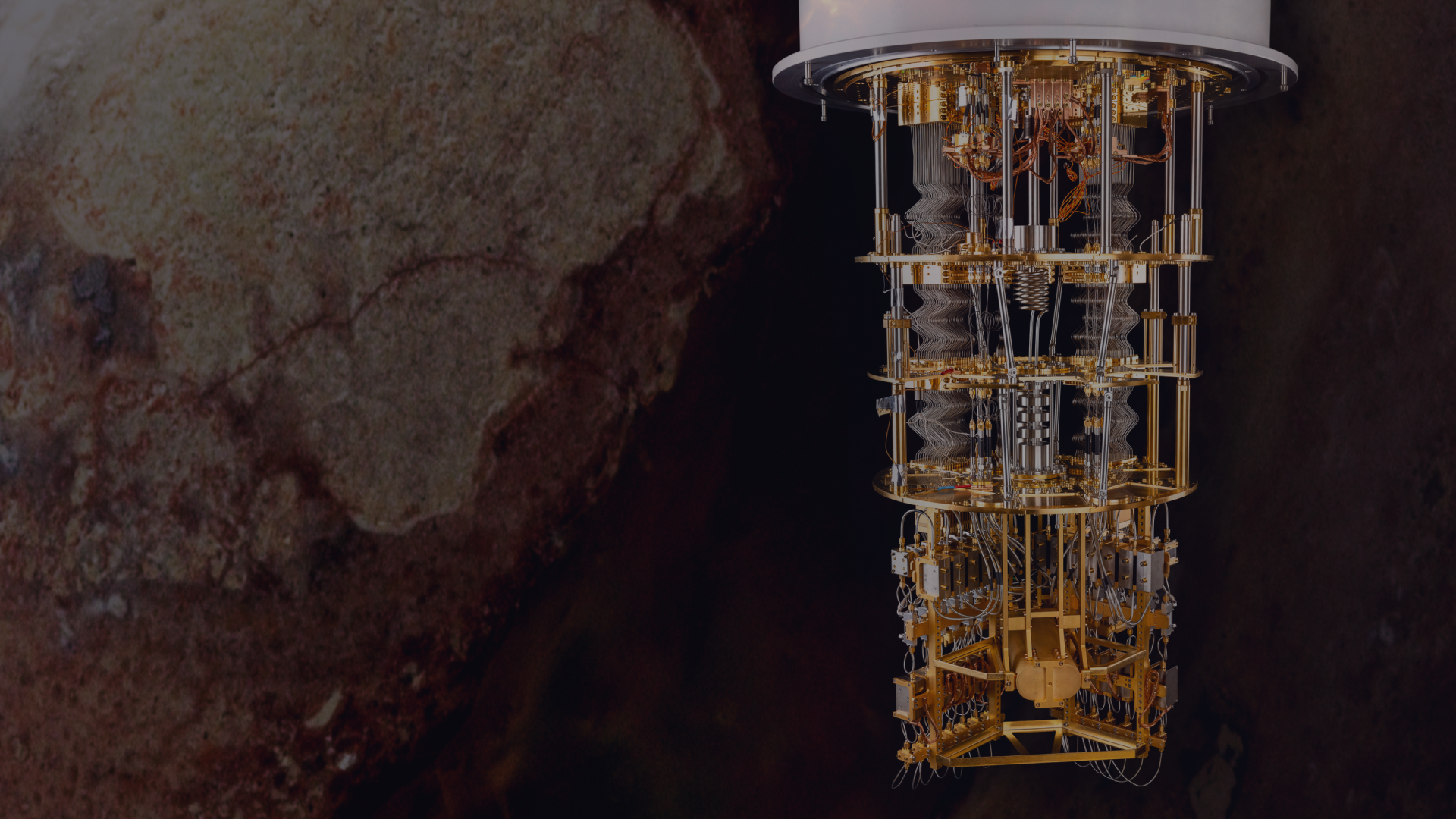

"Access to the Q Network could provide a huge opportunity to run further tests using IBM's superconducting qubit architecture and allow us to explore our ideas on another system," Dr Ball said.